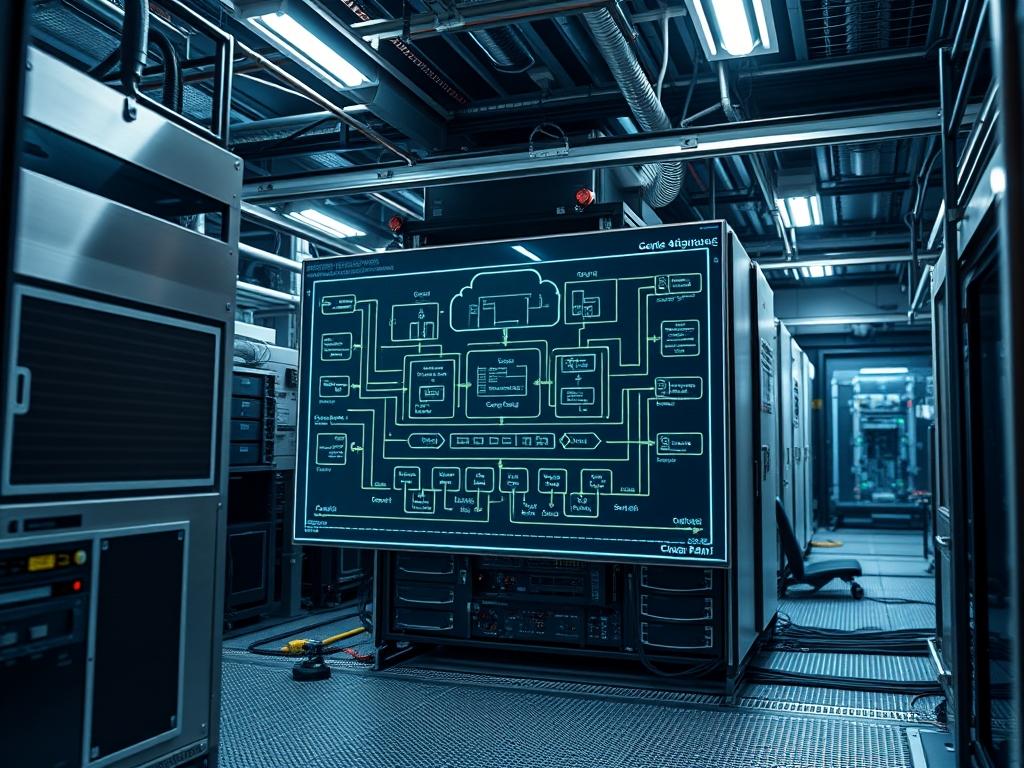

CloudStack Host Management is the orchestration layer where virtualized compute resources meet physical hardware. Within a modern technical stack, whether it supports global telecommunications, municipal water sensor arrays, or high-density cloud compute, the host is the primary execution engine: the CloudStack agent running on it turns Management Server API operations into hypervisor calls that start, stop, and migrate virtual machines. Effective management keeps the hypervisor layer in constant contact with the Management Server so that availability and resource balance can be maintained. A common problem is the gap between physically provisioning a server and logically integrating it into a CloudStack Pod. This manual provides a framework for adding hosts while limiting the risks of packet loss, latency, and resource overhead. By applying idempotent configuration practices (steps that produce the same result no matter how many times they run), administrators can make every new node conform to the same architectural standard and reduce the manual effort of scaling complex infrastructures.

Technical Specifications

| Requirement | Default Port / Location | Protocol / Standard | Impact Level (1-10) | Recommended Resources |
| :--- | :--- | :--- | :--- | :--- |
| Management Heartbeat | Port 8250 (TCP) | Custom Binary/TCP | 9 | 1 Gbps Dedicated Link |
| Hypervisor Access | Port 22 (SSH) / 16509 (Libvirt) | SSH / TCP | 7 | Low Latency Path |
| Storage Connectivity | Port 2049 (NFS) / 3260 (iSCSI) | NFS / iSCSI / Ceph | 10 | 10 Gbps+ Throughput |
| CloudStack Agent | /var/log/cloudstack/agent (logs) | Java 11/17 Runtime | 8 | 2 GB Reserved RAM |
| VNC Console | Ports 5900–6100 | RFB Protocol | 5 | Integrated GPU/ASIC |
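Before adding a host, it is worth confirming that the TCP ports in the table are actually reachable from the machines that need them. The following is a minimal sketch, assuming bash is installed (its /dev/tcp redirection does the probing) along with the coreutils timeout command; check_port and report_port are hypothetical helper names, not part of CloudStack.

```bash
# Hypothetical reachability probe for the TCP ports listed in the table.
# Uses bash's /dev/tcp redirection and coreutils `timeout`.

# check_port HOST PORT [SECONDS]
# Succeeds (exit 0) if a TCP connection to HOST:PORT opens within SECONDS.
check_port() {
    local host=$1 port=$2 secs=${3:-3}
    timeout "$secs" bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# report_port LABEL HOST PORT
# Prints an OK/FAIL line and returns the probe's status.
report_port() {
    local label=$1 host=$2 port=$3
    if check_port "$host" "$port"; then
        printf 'OK    %-20s %s:%s\n' "$label" "$host" "$port"
    else
        printf 'FAIL  %-20s %s:%s\n' "$label" "$host" "$port"
        return 1
    fi
}
```

For example, running report_port "Management Heartbeat" 192.0.2.5 8250 on the new host tests the path to a Management Server at the placeholder address 192.0.2.5. A successful connect only proves that the TCP port is open; it says nothing about UDP-based services or protocol-level authentication.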

Environment Prerequisites:

Ensure the target server is running a supported Linux distribution such as RHEL 8/9, Rocky Linux, or Ubuntu 20.04/22.04. The hypervisor of choice, typically KVM for open-source deployments, requires hardware virtualization (VT-x or AMD-V) to be enabled in the BIOS/UEFI. The network must provide a functional bridge (e.g., cloudbr0) configured via nmcli or netplan. Installing the cloudstack-agent package requires root or sudo access. Finally, set SELinux to permissive, or configure it with the booleans needed for hypervisor-to-management communication.
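These prerequisites can be verified with a short preflight script before the agent is installed. This is a sketch under stated assumptions: the helper names are hypothetical; has_hw_virt only looks for the vmx (Intel VT-x) or svm (AMD-V) CPU flag, which shows the capability exists but not necessarily that it is enabled in firmware; and bridge_exists relies on the /sys/class/net/NAME/bridge directory that Linux creates for every bridge interface.

```bash
# Hypothetical preflight checks for a new KVM host.

# has_hw_virt [CPUINFO]: succeed if the CPU advertises VT-x (vmx) or AMD-V (svm).
has_hw_virt() {
    grep -Eq '(^|[[:space:]])(vmx|svm)([[:space:]]|$)' "${1:-/proc/cpuinfo}"
}

# bridge_exists NAME [SYSFS_NET]: succeed if NAME is a Linux bridge (e.g. cloudbr0).
bridge_exists() {
    [ -d "${2:-/sys/class/net}/$1/bridge" ]
}

# selinux_mode: print Enforcing, Permissive or Disabled; "absent" if SELinux tools are missing.
selinux_mode() {
    if command -v getenforce >/dev/null 2>&1; then
        getenforce
    else
        echo absent
    fi
}

# preflight: summarize the checks; returns non-zero if a hard requirement fails.
preflight() {
    local rc=0
    if has_hw_virt; then echo "cpu: vmx/svm flag present"; else echo "cpu: no vmx/svm flag"; rc=1; fi
    if bridge_exists cloudbr0; then echo "net: cloudbr0 present"; else echo "net: cloudbr0 missing"; rc=1; fi
    echo "selinux: $(selinux_mode)"
    return "$rc"
}
```

Running preflight on the host prints one line per check and returns non-zero if the CPU flag or cloudbr0 is missing. The SELinux mode is only reported, not enforced, since a properly configured enforcing policy is also acceptable.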

Section A: Implementation Logic:

CloudStack Host Management encapsulates physical compute capacity in a manageable logical unit. When a KVM host is added, the Management Server connects to it over SSH to configure the agent, and the agent then opens a persistent connection back to the Management Server on port 8250. The agent acts as a translator between CloudStack's global state and the local libvirt daemon, so the Management Server does not need to understand the nuances of the underlying hardware; it relies on the agent to expose a uniform interface for VM lifecycle operations. This design keeps overhead low and separates the control plane from the data plane: if the Management Server fails, guest VMs already running on the host keep running.

Step-By-Step Execution

1. Configure the Repository and Install the Agent

yum install cloudstack-agent (RHEL 8/9, Rocky Linux) or apt-get install cloudstack-agent (Ubuntu)

System Note: This command installs the agent and its Java dependencies, populates the /etc/cloudstack/agent/ directory with configuration templates such as agent.properties, and adds the cloudstack-agent service unit that systemctl manages. The CloudStack package repository for your distribution must already be configured, or the package manager will not find cloudstack-agent.
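Because the install command differs between distributions, a provisioning script can choose it from /etc/os-release, a shell-compatible file that defines ID and, on derivative distributions, ID_LIKE. A minimal sketch: agent_install_cmd is a hypothetical helper that prints the command instead of running it, and it assumes the CloudStack package repository is already configured.

```bash
# agent_install_cmd [OS_RELEASE]: print the cloudstack-agent install command
# for this distribution. Hypothetical helper; it prints rather than runs the
# command so it can be reviewed first.
agent_install_cmd() {
    local ids
    # Source os-release in a subshell so ID/ID_LIKE do not leak into the caller.
    ids=$(. "${1:-/etc/os-release}" && echo "${ID:-} ${ID_LIKE:-}")
    case " $ids " in
        *" rhel "*|*" rocky "*|*" centos "*|*" fedora "*)
            echo "yum install -y cloudstack-agent" ;;
        *" ubuntu "*|*" debian "*)
            echo "apt-get install -y cloudstack-agent" ;;
        *)
            echo "unsupported distribution: ${ids}" >&2
            return 1 ;;
    esac
}
```

On the host, sudo sh -c "$(agent_install_cmd)" runs the printed command once you have reviewed it.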

2. Configure Libvirt for Remote Access

Edit /etc/libvirt/libvirtd.conf to set listen_tls = 0 and listen_tcp = 1.

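System Note: These settings change the socket binding of the libvirtd process so that, in addition to its local Unix sockets, it accepts unencrypted TCP connections on port 16509. CloudStack needs this listener for live migration, during which the libvirt daemon on one KVM host connects to the libvirt daemon on another. The Management Server itself does not talk to libvirt directly; it sends commands to the cloudstack-agent over the agent's connection on port 8250, and the agent drives the local libvirt daemon.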
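To make this edit repeatable (idempotent), a script can rewrite each key whether it is commented out or already set, and append it only when it is missing. This sketch assumes GNU sed (for -i and -E); set_conf_option and configure_libvirt_tcp are hypothetical helpers, and tcp_port = "16509" merely pins the port listed in the table. On libvirt releases that start libvirtd through systemd socket activation, listen_tcp and listen_tls are not honoured and the TCP listener is enabled through the libvirtd-tcp.socket unit instead, so check which mode your distribution uses.

```bash
# Hypothetical idempotent editor for "key = value" files such as libvirtd.conf.
# Requires GNU sed (-i, -E).

# set_conf_option FILE KEY VALUE
# Rewrites an existing (possibly commented-out) KEY line, or appends one if absent.
set_conf_option() {
    local file=$1 key=$2 value=$3
    if grep -Eq "^[#[:space:]]*${key}[[:space:]]*=" "$file"; then
        sed -i -E "s|^[#[:space:]]*${key}[[:space:]]*=.*|${key} = ${value}|" "$file"
    else
        printf '%s = %s\n' "$key" "$value" >> "$file"
    fi
}

# configure_libvirt_tcp [FILE]: apply the settings from this step.
configure_libvirt_tcp() {
    local conf=${1:-/etc/libvirt/libvirtd.conf}
    set_conf_option "$conf" listen_tls 0
    set_conf_option "$conf" listen_tcp 1
    set_conf_option "$conf" tcp_port '"16509"'
}
```

Running configure_libvirt_tcp twice leaves exactly one active line per key, which is what makes it safe to include in automated provisioning.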

3. Initialize the Network Bridge

nmcli con add type bridge ifname cloudbr0 followed by nmcli con add type bridge-slave ifname eth0 master cloudbr0

System Note: This creates a Layer 2 software bridge. Attaching the physical interface (eth0) to the bridge (cloudbr0) lets the hypervisor place virtual machine traffic directly on the physical network segment, avoiding the extra hop and overhead of a NAT layer. Once eth0 is enslaved, the host's IP address must be configured on cloudbr0 rather than on eth0, so running these commands over an SSH session on eth0 can drop your connection.
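Since enslaving eth0 moves the host's address onto cloudbr0, it can help to generate the full set of nmcli commands, review them, and run them from a console. This is a sketch assuming a static IPv4 address: bridge_cmds is a hypothetical helper, the connection names it chooses (cloudbr0 and cloudbr0-port-eth0) are assumptions, and an existing NetworkManager profile on eth0 may still need to be deactivated or removed.

```bash
# bridge_cmds BRIDGE IFACE ADDR/PREFIX GATEWAY
# Prints (does not run) nmcli commands that create BRIDGE, enslave IFACE,
# and give the bridge a static IPv4 address. Hypothetical helper.
bridge_cmds() {
    if [ $# -ne 4 ]; then
        echo "usage: bridge_cmds BRIDGE IFACE ADDR/PREFIX GATEWAY" >&2
        return 1
    fi
    local br=$1 ifc=$2 addr=$3 gw=$4
    echo "nmcli con add type bridge ifname ${br} con-name ${br} ipv4.method manual ipv4.addresses ${addr} ipv4.gateway ${gw}"
    echo "nmcli con add type bridge-slave ifname ${ifc} master ${br} con-name ${br}-port-${ifc}"
    echo "nmcli con up ${br}"
}
```

For example, bridge_cmds cloudbr0 eth0 192.0.2.10/24 192.0.2.1 > bridge.sh writes the commands to a file you can inspect before running sh bridge.sh; the addresses are documentation placeholders.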

4. Edit the Agent Properties

Modify /etc/cloudstack/agent/agent.properties and define the host (Management Server IP).

System Note: This file is the agent's primary configuration. The host key tells the agent which Management Server to register with, and the guid key gives this machine a unique identity, so the Management Server can tell it apart from every other host in the deployment.
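Because agent.properties uses plain key=value lines, the same idempotent approach applies. In this sketch, set_property and ensure_guid are hypothetical helpers; ensure_guid writes a random UUID (read from the Linux kernel's /proc/sys/kernel/random/uuid) only when the file has no guid yet, so rerunning the script keeps the host's identity stable. When a host is added through the UI, CloudStack's own setup may already have written these keys, so check the file before overwriting them.

```bash
# Hypothetical helpers for /etc/cloudstack/agent/agent.properties (key=value format).

# set_property FILE KEY VALUE: replace KEY's line if present, otherwise append it.
set_property() {
    local file=$1 key=$2 value=$3
    if grep -q "^${key}=" "$file"; then
        sed -i "s|^${key}=.*|${key}=${value}|" "$file"
    else
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# ensure_guid FILE: add a random UUID as guid only if no non-empty guid exists yet.
ensure_guid() {
    local file=$1
    grep -q '^guid=.' "$file" && return 0
    set_property "$file" guid "$(cat /proc/sys/kernel/random/uuid)"
}
```

For example, set_property /etc/cloudstack/agent/agent.properties host 192.0.2.5 followed by ensure_guid /etc/cloudstack/agent/agent.properties, where 192.0.2.5 stands in for your Management Server IP.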

5. Restart Services and Verify Connection

systemctl restart libvirtd followed by systemctl restart cloudstack-agent

System Note: Restarting libvirtd applies the new listener settings, and restarting cloudstack-agent makes the agent re-read agent.properties. The agent then attempts to open a persistent TCP connection to the Management Server on port 8250. Run systemctl status cloudstack-agent to confirm the process is active.
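Instead of checking the status once, a provisioning script can poll until the agent is active and holds an established TCP connection to port 8250. A sketch under stated assumptions: wait_until and agent_connected are hypothetical helpers, agent_connected assumes systemd and the ss utility from iproute2, and the timeout is counted in seconds slept rather than exact wall-clock time.

```bash
# wait_until SECONDS CMD [ARGS...]: rerun CMD about once per second until it
# succeeds; return 1 after roughly SECONDS seconds of sleeping.
wait_until() {
    local limit=$1 waited=0
    shift
    until "$@"; do
        [ "$waited" -ge "$limit" ] && return 1
        sleep 1
        waited=$((waited + 1))
    done
}

# agent_connected: succeed if cloudstack-agent is active and has an established
# TCP connection to a peer on port 8250 (the Management Server).
agent_connected() {
    systemctl is-active --quiet cloudstack-agent &&
        ss -tn state established '( dport = :8250 )' | grep -q ':8250'
}
```

For example, wait_until 120 agent_connected || tail -n 50 /var/log/cloudstack/agent/agent.log gives the agent about two minutes to connect and shows the recent log if it does not.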

Section B: Dependency Fault-Lines:

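Software conflicts frequently arise from mismatched Java versions or incompatible libvirt releases. If the host fails to reach the “Up” state, verify that KVM hardware acceleration works: run kvm-ok on Ubuntu (from the cpu-checker package) or virt-host-validate on RHEL-family systems. The firewall is another common bottleneck. If iptables or firewalld on the Management Server blocks inbound TCP port 8250, the agent cannot open its connection at all, so it neither sends heartbeats nor receives commands, and the host stays in the “Connecting” or “Disconnected” state. Finally, check the net.bridge.bridge-nf-call-iptables sysctl: when it is set to 1, traffic crossing the Linux bridges is also filtered by the host's iptables rules, and a rule written only with routed traffic in mind can silently drop guest packets.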
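The checks in this section can be bundled into two small helpers. A sketch under stated assumptions: kvm_check (a hypothetical name) prefers kvm-ok, falls back to virt-host-validate qemu, and finally to the presence of /dev/kvm; sysctl_value (also hypothetical) reads a key straight from /proc/sys. The net.bridge.* keys exist only while the br_netfilter kernel module is loaded, so a missing key is itself a useful signal.

```bash
# kvm_check: run whichever KVM validation tool is installed.
kvm_check() {
    if command -v kvm-ok >/dev/null 2>&1; then
        kvm-ok
    elif command -v virt-host-validate >/dev/null 2>&1; then
        virt-host-validate qemu
    else
        # Last resort: the device node appears once the KVM modules are loaded.
        [ -c /dev/kvm ]
    fi
}

# sysctl_value KEY [ROOT]: print a sysctl value such as
# net.bridge.bridge-nf-call-iptables; fails if the key does not exist.
sysctl_value() {
    local path
    path="${2:-/proc/sys}/$(printf '%s' "$1" | tr . /)"
    [ -r "$path" ] && cat "$path"
}
```

For example, sysctl_value net.bridge.bridge-nf-call-iptables prints 1 when bridged traffic is being passed through iptables.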

Section C: Logs & Debugging:

When a host stays in the “Connecting” or “Disconnected” state, start the audit with /var/log/cloudstack/agent/agent.log. Look specifically for the “StartupAnswer” and “Link dropped” strings. A “Link dropped” error usually indicates a network timeout or high latency on the management network. If the log shows “Permission denied” when starting a VM, run ls -l /dev/kvm to verify that the account QEMU runs under (commonly qemu on RHEL-family systems or libvirt-qemu on Ubuntu) has read/write access to the hardware acceleration device.
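The first audit pass can be scripted by pulling the most recent matching lines from the agent log together with the ownership of the KVM device. In this sketch, scan_agent_log and kvm_device_perms are hypothetical helpers; the search strings are the ones named above, and stat -c assumes GNU coreutils.

```bash
# scan_agent_log [LOG] [N]: print the last N lines (with line numbers) that
# contain one of the markers discussed in this section.
scan_agent_log() {
    local log=${1:-/var/log/cloudstack/agent/agent.log} n=${2:-20}
    grep -nE 'StartupAnswer|Link dropped|Permission denied' "$log" | tail -n "$n"
}

# kvm_device_perms [DEVICE]: print owner:group and octal mode of the device.
kvm_device_perms() {
    stat -c '%U:%G %a' "${1:-/dev/kvm}"
}
```

Running scan_agent_log with no arguments shows the last 20 matches from the default log path, and kvm_device_perms prints the owner, group, and octal mode of /dev/kvm.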

For deeper inspection of host-to-Management-Server traffic, run tcpdump -i eth0 port 8250. This shows whether the packets reach the interface at all, or whether they are being discarded, for example because of an MTU mismatch or errors on the physical link. If the CloudStack UI shows a red status icon for the host, cross-reference the host’s UUID with its record in the host table of the cloud database, which you can open with mysql -u cloud -p.
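The database cross-check can be scripted as a read-only query. This sketch assumes the standard cloud database and that its host table has id, uuid, name, status, resource_state, and removed columns; host_query is a hypothetical helper that refuses any name containing characters other than letters, digits, dots, underscores, and hyphens, so the value cannot break out of the SQL string literal.

```bash
# host_query NAME: print a read-only SQL query for one host row in the cloud database.
host_query() {
    local name=$1
    case $name in
        ''|*[!A-Za-z0-9._-]*)
            echo "refusing unexpected host name: '$name'" >&2
            return 1 ;;
    esac
    printf "SELECT id, uuid, name, status, resource_state, removed FROM cloud.host WHERE name = '%s';\n" "$name"
}
```

For example, mysql -u cloud -p -e "$(host_query kvm-host-01)" shows the row for a host named kvm-host-01 (a placeholder). A non-NULL removed value typically means CloudStack considers the host deleted.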

Optimization & Hardening

Performance Tuning:

To maximize throughput and minimize latency, tune the concurrency settings in agent.properties: increase the workers count to match the number of CPU cores you can dedicate to management tasks. Raise sysctl parameters such as net.core.rmem_max and net.core.wmem_max so that larger network payloads do not overflow socket buffers. Finally, set the host’s power management policy to “Performance” in the BIOS so that CPU frequency scaling does not add latency to operations.
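The sysctl part of this tuning is easiest to manage as a drop-in file under /etc/sysctl.d, loaded with sysctl --system. In this sketch, net_buffer_conf and cpu_governors are hypothetical helpers; the 16 MiB value in the example below is an illustrative assumption, not a CloudStack recommendation, so size it to your link speed and latency. cpu_governors reads the kernel's cpufreq sysfs files so you can confirm that no core is running a power-saving governor.

```bash
# net_buffer_conf BYTES: print sysctl.d content raising the socket buffer ceilings.
net_buffer_conf() {
    case $1 in
        ''|*[!0-9]*) echo "BYTES must be a non-negative integer" >&2; return 1 ;;
    esac
    printf 'net.core.rmem_max = %s\nnet.core.wmem_max = %s\n' "$1" "$1"
}

# cpu_governors [SYSFS_CPU]: print each distinct cpufreq scaling governor in use.
cpu_governors() {
    cat "${1:-/sys/devices/system/cpu}"/cpu[0-9]*/cpufreq/scaling_governor 2>/dev/null | sort -u
}
```

For example, net_buffer_conf 16777216 > /etc/sysctl.d/90-cloudstack-net.conf && sysctl --system applies the setting (the file name is arbitrary). If cpu_governors prints anything other than performance, or nothing at all because cpufreq is not exposed, review the BIOS power policy.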

Security Hardening:

Security is paramount. Carry all communication between the host and the Management Server over a dedicated management VLAN to isolate it from guest traffic. Use iptables to restrict port 22 so that only the Management Server’s IP address can connect, and restrict port 16509 to the management network: the other KVM hosts in the cluster connect to it during live migration, so limiting it to the Management Server alone would break migration. Disable unnecessary kernel modules to reduce the attack surface, and run chmod 600 on every configuration file that contains credentials or UUIDs.
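The iptables restrictions can be generated as a rule set you review before applying. A sketch under stated assumptions: hardening_rules is a hypothetical helper; each rule is inserted at an explicit position so every ACCEPT precedes its DROP, even when the INPUT chain already ends in a catch-all reject; and MGMT_SUBNET is whatever network your KVM hosts use to reach one another. Apply the rules from the console or from the Management Server itself, because the port 22 DROP also cuts existing SSH sessions from any other address, and note that rules added this way do not survive a reboot unless saved with your distribution's iptables tooling.

```bash
# hardening_rules MGMT_SERVER_IP MGMT_SUBNET
# Prints (does not run) iptables commands that allow SSH only from the
# Management Server and libvirt TCP (16509) only from the management subnet.
hardening_rules() {
    if [ $# -ne 2 ]; then
        echo "usage: hardening_rules MGMT_SERVER_IP MGMT_SUBNET" >&2
        return 1
    fi
    local ms=$1 subnet=$2
    cat <<EOF
iptables -I INPUT 1 -p tcp -s ${ms} --dport 22 -j ACCEPT
iptables -I INPUT 2 -p tcp --dport 22 -j DROP
iptables -I INPUT 3 -p tcp -s ${subnet} --dport 16509 -j ACCEPT
iptables -I INPUT 4 -p tcp --dport 16509 -j DROP
EOF
}
```

For example, hardening_rules 192.0.2.5 192.0.2.0/24 prints four iptables commands for a Management Server at 192.0.2.5 on the placeholder subnet 192.0.2.0/24.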

Scaling Logic:

When scaling to a multi-rack deployment, use the “Zone-Pod-Cluster” hierarchy. Give every host in a Cluster an identical hardware profile, in particular the same CPU family and feature set, so that live migrations between hosts behave predictably. As the number of hosts grows, monitor the Management Server’s CPU and memory usage; if utilization regularly exceeds 60 percent, consider deploying additional Management Servers behind a load balancer to absorb the increased heartbeat traffic.
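The 60 percent guideline can be watched with a small check on each Management Server, although a monitoring system would normally do this. In this sketch, mem_used_pct and over_threshold are hypothetical helpers, and memory “in use” is taken to mean MemTotal minus MemAvailable from /proc/meminfo.

```bash
# mem_used_pct [MEMINFO]: integer percent of memory in use (MemTotal - MemAvailable).
mem_used_pct() {
    awk '/^MemTotal:/     { total = $2 }
         /^MemAvailable:/ { avail = $2 }
         END {
             if (total > 0) printf "%d\n", (total - avail) * 100 / total
             else exit 1
         }' "${1:-/proc/meminfo}"
}

# over_threshold PERCENT [LIMIT]: succeed if PERCENT exceeds LIMIT (default 60).
over_threshold() {
    [ "$1" -gt "${2:-60}" ]
}
```

For example, pct=$(mem_used_pct) && over_threshold "$pct" && echo "memory at ${pct}%" prints a warning only when usage exceeds 60 percent. A similar CPU check would compare the load average in /proc/loadavg with the core count.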

The Admin Desk

How do I fix a Routing (hypervisor) host that never reaches the “Up” state?

This indicates the Management Server cannot reach the agent. Check the cloudstack-agent service status and verify that port 8250 is open on the Management Server. Ensure no firewall is dropping the outbound packets from the host.

Why is my KVM host failing to add via the UI?

The most common cause is an SSH authentication failure or a missing libvirt dependency. Ensure the Management Server’s public key is in the host’s /root/.ssh/authorized_keys file and that libvirtd is listening on TCP.
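Both conditions can be checked from the host itself. This sketch assumes you have a copy of the Management Server's public key on the host as a file; key_authorized (hypothetical) compares the key's base64 field against every authorized_keys entry, ignoring options and comments, and libvirt_tcp_listening (also hypothetical) uses ss from iproute2.

```bash
# key_authorized PUBKEY_FILE [AUTHORIZED_KEYS]: succeed if the key in PUBKEY_FILE
# appears as a field in any line of AUTHORIZED_KEYS.
key_authorized() {
    local pub=$1 auth=${2:-/root/.ssh/authorized_keys} blob
    blob=$(awk '{ print $2; exit }' "$pub")
    [ -n "$blob" ] || return 1
    awk -v b="$blob" '{ for (i = 1; i <= NF; i++) if ($i == b) found = 1 }
                      END { exit !found }' "$auth"
}

# libvirt_tcp_listening: succeed if something listens on TCP port 16509.
libvirt_tcp_listening() {
    ss -ltn '( sport = :16509 )' | grep -q ':16509'
}
```

For example, key_authorized /tmp/ms.pub && libvirt_tcp_listening succeeds only if both conditions hold; /tmp/ms.pub is a placeholder path.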

What causes “insufficient capacity” errors when adding a host?

This is often a logical error in the CloudStack database or a mismatch in the “Reserved System RAM” setting. Ensure the host has enough unallocated memory and that the Primary Storage is successfully mounted on the host via NFS/iSCSI.
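To check the storage and memory side from the host, list its NFS mounts and the memory the kernel reports as available. In this sketch, nfs_mounts and mem_available_mib are hypothetical helpers that read /proc/mounts and /proc/meminfo. nfs_mounts does not know which mount belongs to which CloudStack pool, so compare its output with the Primary Storage list in the UI; iSCSI-backed storage will not appear in it.

```bash
# nfs_mounts [MOUNTS]: print "SOURCE -> MOUNTPOINT" for every NFS mount.
nfs_mounts() {
    awk '$3 == "nfs" || $3 == "nfs4" { print $1 " -> " $2 }' "${1:-/proc/mounts}"
}

# mem_available_mib [MEMINFO]: MemAvailable converted from kB to MiB.
mem_available_mib() {
    awk '/^MemAvailable:/ { printf "%d\n", $2 / 1024 }' "${1:-/proc/meminfo}"
}
```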

How can I verify the agent is communicating with the server?

Tail the agent log using tail -f /var/log/cloudstack/agent/agent.log. Look for “Sending: Seq…”. This confirms the agent is pushing its state to the Management Server. If you see “Connection refused”, check the Management Server IP configuration.

How do I handle a host that won’t enter Maintenance Mode?

Maintenance mode fails if VMs cannot be migrated off the host. Check the “MIGRATE” status in the log. If migration fails because of storage latency or packet loss, stop the affected VMs manually or investigate the throughput of the Primary Storage network.